What’s in PernixData FVP’s secret sauce

Anyone who manages or architects a virtualisation environment battles against storage performance at some stage or another. If you run into compute resource constraints, it is very easy and fairly cheap to add more memory or perhaps another host to your cluster.

Being able to add compute incrementally makes it simple and cost-effective to scale. Networking is similar: it is very easy to patch in another 1GbE port, and with 10GbE becoming far more common, network bandwidth constraints seem to be the least of your worries. It’s not the same with storage. This is mainly down to cost and the fact that spinning hard drives haven’t got any faster. You can’t just swap out a slow drive for a faster one in a drive array, and a new array shelf is a large incremental cost.

Sure, flash is revolutionising array storage, but it’s going to take time to replace spinning rust with flash, and again it often comes down to cost. Purchasing an all-flash array, or even just a shelf of flash for your existing array, is expensive and a large incremental jump when perhaps you just need some more oomph during your month-end job runs.

VDI environments have often borne the brunt of storage performance issues, simply due to the number of VMs involved, poor client software that was never written to be careful with storage IO and latency, and operational procedures for mass AV updates, patching etc. that simply kill any storage. VDI was often incorrectly justified with cost reduction as part of the benefit, which meant you never had any money to spend on storage for what ultimately grew into a massive environment with annoyed users battling poor performance.

Large performance-critical VMs are also affected by storage. Any IO that has to travel along a remote path to a storage array is going to be that little bit slower. Your big databases would benefit enormously from reducing this round-trip time.

FVP

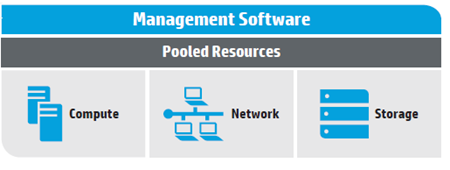

Along came PernixData at just the right time with a simple solution called FVP. Install flash (SSD or PCIe) in your ESXi hosts, cluster it as a pooled resource, and then use software to offload IO from the storage array to the ESXi hosts. Even better, it can cache writes as well as reads and protect those writes across the flash cluster. The best IO in the world is the IO you don’t have to do, and this gives your storage array a little more breathing room. The benefit is that you can keep your existing array, with its long update cycles, and squeeze a little more life out of it without an expensive upgrade or even moving VM storage. By the way, the name FVP doesn’t stand for anything. If you were wondering, it doesn’t stand for Flash Virtualisation Platform, which would be incorrect anyway since FVP accelerates with more than just flash.
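To make the idea of host-side caching a little more concrete, here is a toy Python sketch. It is not how FVP is implemented; it is just a minimal model of the general technique, assuming an LRU read cache, write-back caching, and a copy of each unflushed write kept on one peer host. The class names (`BackingArray`, `HostFlashCache`) and everything inside them are made up for illustration.

```python
from collections import OrderedDict


class BackingArray:
    """Stand-in for the shared storage array; counts every IO that reaches it."""

    def __init__(self):
        self.blocks = {}
        self.reads = 0
        self.writes = 0

    def read(self, lba):
        self.reads += 1
        return self.blocks.get(lba)

    def write(self, lba, data):
        self.writes += 1
        self.blocks[lba] = data


class HostFlashCache:
    """Hypothetical host-side cache: LRU reads, write-back, replica on a peer."""

    def __init__(self, array, capacity, peer=None):
        self.array = array
        self.capacity = capacity
        self.peer = peer            # another host that holds copies of our dirty writes
        self.cache = OrderedDict()  # lba -> data, least recently used first
        self.dirty = set()          # lbas written locally but not yet on the array
        self.replicas = {}          # dirty blocks this host holds on behalf of a peer

    def read(self, lba):
        if lba in self.cache:
            # Cache hit: served from local flash, the array never sees this IO.
            self.cache.move_to_end(lba)
            return self.cache[lba]
        data = self.array.read(lba)
        self._store(lba, data)
        return data

    def write(self, lba, data):
        # Write-back: acknowledge once the block is in local flash and on the peer.
        self._store(lba, data)
        self.dirty.add(lba)
        if self.peer is not None:
            self.peer.replicas[lba] = data

    def flush(self):
        # Destage all dirty blocks to the array and release the peer copies.
        for lba in sorted(self.dirty):
            self._destage(lba, self.cache[lba])
        self.dirty.clear()

    def _destage(self, lba, data):
        self.array.write(lba, data)
        if self.peer is not None:
            self.peer.replicas.pop(lba, None)

    def _store(self, lba, data):
        self.cache[lba] = data
        self.cache.move_to_end(lba)
        while len(self.cache) > self.capacity:
            old_lba, old_data = self.cache.popitem(last=False)
            if old_lba in self.dirty:
                # Never drop unflushed data: write it to the array before evicting.
                self._destage(old_lba, old_data)
                self.dirty.discard(old_lba)
```

In this model a repeated read of the same block only touches the array once, and a write does not touch the array at all until `flush()` runs or the block is evicted, while the peer keeps a copy in case the writing host is lost in the meantime.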

This is great, but I think SDDC is just a stepping stone to what we are really trying to achieve, which is the “Policy Defined Data Center”.