What’s in PernixData FVP’s secret sauce

Anyone who manages or architects a virtualisation environment battles against storage performance at some stage or another. If you run into compute resource constraints, it is very easy and fairly cheap to add more memory or perhaps another host to your cluster.

Being able to add to compute incrementally makes it very simple and cost effective to scale. Networking is similar: it is very easy to patch in another 1Gb port, and with 10Gb becoming far more common, network bandwidth constraints seem to be the least of your worries. It’s not the same with storage. This is mainly down to cost and the fact that spinning hard drives haven’t got any faster. You can’t just swap out a slow drive for a faster one in a drive array, and a new array shelf is a large incremental cost.

Sure, flash is revolutionising array storage but it’s going to take time to replace spinning rust with flash, and again it often comes down to cost. Purchasing an all-flash array or even just a shelf of flash for your existing array is expensive and a large incremental jump when perhaps you just need some more oomph during your month-end job runs.

VDI environments have often borne the brunt of storage performance issues simply due to the number of VMs involved, poor client software that was never written to be careful with storage IO and latency, and operational procedures for mass AV updates, patching etc. that simply kill any storage. VDI was often incorrectly justified with cost reduction as part of the benefit, which meant you never had any money to spend on storage for what ultimately grew into a massive environment with annoyed users battling poor performance.

Large performance critical VMs are also affected by storage. Any IO that has to travel along a remote path to a storage array is going to be that little bit slower. Your big databases would benefit enormously by reducing this round trip time.

FVP

Along came PernixData at just the right time with a simple solution called FVP. Install some flash, either SSD or PCIe, into your ESXi hosts, cluster the devices as a pooled resource and then use software to offload IO from the storage array to the ESXi host. Even better, cache writes as well and protect them in the flash cluster. The best IO in the world is the IO you don’t have to do, and you could give your storage array a little more breathing room. The benefit was you could use your existing array with its long update cycles and squeeze a little bit more life out of it without an expensive upgrade or even moving VM storage. By the way, the name FVP doesn’t stand for anything. It doesn’t stand for Flash Virtualisation Platform, in case you were wondering, which would be incorrect anyway as FVP uses more than just flash to accelerate storage.

PernixData’s marketing story is you decouple storage capacity from storage performance. You use your existing array as a capacity tier and make storage performance the job of FVP. Your working data can be served by local flash. Also, every time you add a flash device into an ESXi host and give PernixData some money for a license you add storage performance. You can now scale storage performance the same way you scale compute. As PernixData FVP is transparent to your existing storage array, you also don’t need to move VMs to a new datastore which makes it very easy to implement without changing any of your existing processes or datastores.

Of course, PernixData wasn’t the only company looking at this problem. Other companies have released in-memory caching technologies which do a similar thing. They install a virtual appliance between your storage and your ESXi hosts which uses RAM as a fast cache and, with today’s super speedy CPUs, can dedupe IO in near real-time, reducing what you need your storage array to do.

So, what is special about PernixData’s approach?

Pedigree

Well, first of all it’s a pedigree thing. PernixData was born out of some rather clever engineers at VMware itself. CEO Poojan Kumar was the data products boss at VMware and co-founded Oracle Exadata. CTO Satyam Vaghani created VMFS and has also been awarded more than 50 patents, including for VMware Virtual Volumes, which I saw him introduce at VMworld 2011. VVols aren’t even out yet so you can get the idea that he’s pretty up to date with VM storage!

Inside the hypervisor

This in-depth knowledge has meant PernixData FVP has been written directly in the hypervisor. It is not running as a virtual appliance as most of its competitors do but installed directly alongside the hypervisor code. This isn’t an easy thing to do. Very few companies are able to build software directly into the hypervisor and VMware is rightly rather protective about the companies it lets into ESXi as they spend an enormous amount of time and money making sure ESXi is rock solid.

Cache consistency

The next and probably most distinctive aspect is that FVP has a very clever cache consistency engine. Anything that is clustered somehow has to keep all the cluster members up to date with what has been written where, so other members can access the latest data. This generally presents a scalability issue: the more nodes you add, the more nodes you normally need to tell what is where, which limits the total number of nodes possible. Maintaining cache consistency as your cluster grows becomes an ever increasing problem.
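To see why this gets painful, here is a minimal sketch of a deliberately naive scheme in which every cache update is announced to every other node. This is an assumed strawman for illustration only, not how FVP or any particular product works, and the function name and node counts are made up.

```python
def broadcast_messages(nodes: int, updates_per_node: int) -> int:
    """Messages sent if every node announces each update to all other nodes.

    Hypothetical naive scheme: each update costs (nodes - 1) messages.
    """
    if nodes < 1 or updates_per_node < 0:
        raise ValueError("nodes must be >= 1 and updates_per_node >= 0")
    return nodes * (nodes - 1) * updates_per_node


# One update per node, at a few illustrative cluster sizes.
for n in (4, 32, 1000):
    print(n, "nodes ->", broadcast_messages(n, 1), "messages")
```

Under this assumption the message count grows roughly with the square of the cluster size, which is the kind of growth that ends up capping how many nodes a cluster can have.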

PernixData FVP has managed to create a cache consistency system without having to send messages to the other nodes. When a host adds something to the cache cluster, the other nodes don’t need to be explicitly told there is an update, they just know. This is an extremely important differentiator and unique to PernixData, with patents pending. I’d love to tell you I know how this works in detail, but this is the very basis of PernixData’s secret sauce, which they are holding very close to their chest.

This means PernixData FVP clusters are pretty much infinitely scalable. Think thousands of nodes in a cluster, way above your current 32-host ESXi cluster limit. This scalability is such a difficult problem to solve that a number of other major storage and flash vendors have had to shelve or revisit their host flash software attempts. I don’t think VMware itself has worked this all out: their own vSphere Flash Read Cache, which is baked into the hypervisor, is, as the name suggests, read caching only.

Fault Tolerance

PernixData has also managed to create an extremely efficient FT system for their cache writes. For storage caching, when you create a write back cache system, you take on the responsibility of acknowledging the writes before they actually get passed onto the storage. You can’t take any risk of a power cut, SSD/memory failure or anything resulting in losing this write. You therefore need to store the write somewhere remotely as a form of data protection.

The time it takes to create this secondary write is extremely important. It doesn’t matter how fast your local write is: if it’s going to take ages to write remotely before you can acknowledge the write to the application, that remote write is going to set the minimum write time. Making this process as efficient as possible is paramount for performance.
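As a rough illustration of that point, here is a minimal sketch of the latency an application would see. It assumes the local write and the replica writes are issued in parallel and the acknowledgement waits for all of them. The function name and the microsecond figures are invented for the example and are not FVP measurements.

```python
def ack_latency_us(local_write_us: float, replica_writes_us: list[float]) -> float:
    """Latency before a write-back cache can acknowledge a write.

    Assumes all copies are written in parallel and the acknowledgement
    waits for the slowest one (an assumption, not FVP's documented behaviour).
    """
    return max([local_write_us, *replica_writes_us])


# Hypothetical numbers: a 50 us local flash write, a 400 us remote replica.
print(ack_latency_us(50, [400]))   # the remote copy dominates
print(ack_latency_us(50, []))      # no replica: fast, but unprotected
```

However fast the local device is, the acknowledged latency in this model can never be lower than the slowest protected copy, which is why shaving time off the host-to-host path matters so much.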

People may argue that whether you need to do a write to a storage array across a storage network or to another ESXi host across a VMware network, the time is the same but that’s not correct. Sending a write to a storage array is using a SCSI based protocol which is a standard developed for writing to a local disk extended remotely (simplified explanation). There is a lot of overhead with SCSI sending these packets and confirming they have been received.

PernixData FVP is able to simplify this data exchange using their own protocol and drastically reduce the time and latency of a write to a remote ESXi host compared to sending the same write to a storage array. In fact, quite a bit of the remaining latency comes from moving up and down through the ESXi stack. FVP is able to compress the IO where possible, and it also works across a metro cluster, where reducing the amount of IO to send is even more important.

Flash itself has microsecond latency, but the hypervisor still adds a relatively large amount of latency. As FVP is embedded in the hypervisor, it is able to be as efficient as possible compared to running as a virtual appliance. Helpfully, VMware has been working hard on low-latency networking within ESXi so this hypervisor latency is reducing.

There is also a lot of overhead in running an external array. If you have an all-flash array storage shelf containing y flash drives that can each deliver 50,000 IOPS, the total IOPS that the array can deliver is nowhere near the combined y × 50,000 IOPS that your flash drives can deliver, maybe only 70%. You lose a lot of efficiency to the overhead of running storage array software.
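A quick back-of-the-envelope sketch of that arithmetic follows. The 50,000 IOPS per drive and the rough 70% figure come from the paragraph above, while the 24-drive shelf and the function name are assumptions picked purely for illustration.

```python
def shelf_iops(drive_count: int, iops_per_drive: int = 50_000,
               efficiency_percent: int = 70) -> tuple[int, int]:
    """Return (raw, deliverable) IOPS for a shelf of identical flash drives.

    efficiency_percent is the rough share of raw drive IOPS left after the
    array software overhead; 70 is the ballpark used in the text.
    """
    raw = drive_count * iops_per_drive
    deliverable = raw * efficiency_percent // 100
    return raw, deliverable


# Hypothetical 24-drive shelf.
raw, deliverable = shelf_iops(24)
print(f"raw: {raw:,} IOPS, deliverable: {deliverable:,} IOPS")
# prints "raw: 1,200,000 IOPS, deliverable: 840,000 IOPS"
```

In this example 360,000 IOPS that the drives could in principle serve never reach the hosts.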

Product or Feature?

Although PernixData FVP is great at solving current storage problems, I had thought that perhaps it was a short term fix, perhaps a future feature rather than a product. When all storage at some stage or another is flash then do the problems FVP solves go away? OK, it may still be remote storage & hobbled by a SCSI protocol latency tax or even local flash DAS but ultimately will storage be fast enough for the majority of workloads that you wouldn’t need to pay extra for the speed boost?

Well, first of all, it is going to take a long time for flash to be pervasive, so there will be plenty of storage to accelerate for years to come. Think of it also like putting an SSD into your laptop for the first time and being instantly wowed by the massive speed boost. Would you ever go back to a spinning disk in your laptop? Storage performance expectation is like that: there’s no going back, and soon SSD speeds will be the new normal.

Distributed Fault Tolerant Memory

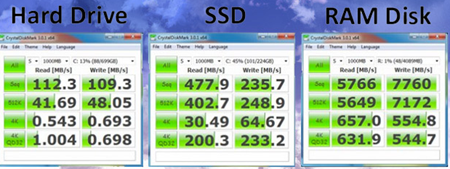

Beyond that though, PernixData FVP has been written to accelerate storage not just with flash but also with RAM. PernixData announced in March at Tech Field Day that they would now support creating clusters of not only flash drives but also memory, basically taking some host memory and using it as a cache. Memory is super-duper fast but has an availability issue. Memory can’t be shared between hosts like shared storage. If a memory module fails or a host fails, all its stored data vanishes. FVP can cluster these memory writes and protect them on other hosts just like with flash. With RAM you now have another even faster and highly available storage tier.

Being able to accelerate storage IO using RAM has other advantages. You don’t even need to purchase a flash drive and can use additional host memory instead. You don’t need to worry about supportability of a flash drive in your host, or about not having an extra drive bay free if you have a blade server. There is no driver overhead, no PCI queue or bandwidth issues and no storage controller config, just acceleration in memory.

The storage performance arms race has a whole new dimension to it when we start being able to use memory as a highly available clustered storage tier. Memory is only going to get faster as it moves closer to the CPU, and if network latency keeps coming down then FVP is well positioned to run your clustered memory tier fronting your all-flash array. Sure, this will take time, but if your competitive advantage is crunching numbers, PernixData may help you cluster your in-memory database across hosts, opening up a world of future opportunities while giving your storage array more breathing room.

VM storage has been revolutionised in a fairly short time. Flash has made a massive impact in so many areas and the revolution has actually only just started.

PernixData very elegantly and simply solves many existing storage issues and I’ll be very keen to keep an eye on what they are cooking up in the future.
