HP Virtual Connect 4.01 Update: Dual-hop FCoE support

HP has released a significant firmware update to its Virtual Connect line of HP Blade chassis switches.

This is part 2 of a 6-part post on HP Virtual Connect 4.01:

- HP Virtual Connect 4.01: What’s New

- HP Virtual Connect 4.01: Dual-hop FCoE support

- HP Virtual Connect 4.01: Min/Max Bandwidth Optimisation

- HP Virtual Connect 4.01: Priority Queue QoS

- HP Virtual Connect 4.01: SNMP and sFlow enhancements

- HP Virtual Connect 4.01: RBAC and Multicast + some more

Dual-hop FCoE support

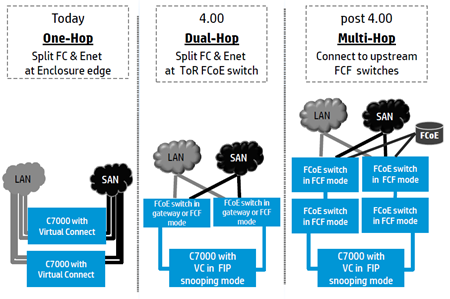

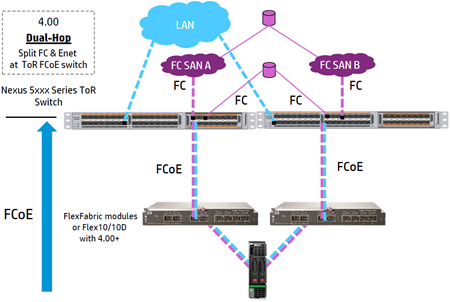

Dual-hop FCoE support is a major enhancement, particularly for people looking to extend convergence from the chassis to ToR switches such as the Cisco Nexus 5000 series or HP's 5800/5900 series. You can keep your existing FC SAN fabric in place and connect it to your Cisco Nexus switches alongside your LAN. You then only need to connect your HP blade chassis to the Cisco Nexus switches, and from there the LAN and SAN traffic is split off to the relevant upstream switches.

Dual-hop FCoE has arrived in VC 4.01, and HP is planning multi-hop FCoE support sometime in the future.

(all graphics from HP, 4.01 was a minor update from 4.00)

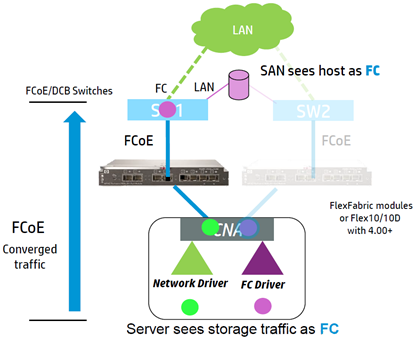

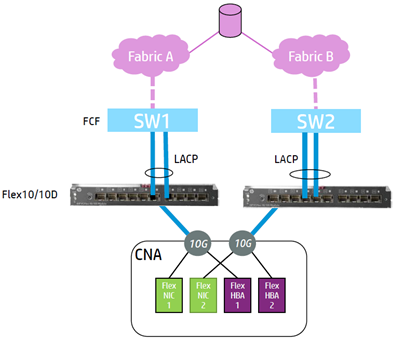

Each blade’s integrated LOM (LAN on Motherboard) NIC is split into 4 NICs called FlexNICs, which the blade sees as 4 separate physical NICs. Virtual Connect FCoE works by dedicating one of these FlexNICs as an FC HBA. The LOM then presents 3 FlexNICs for Ethernet and 1 FlexHBA. The blade server sees storage traffic as pure FC.
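
As a rough illustration of that split, here is a minimal Python sketch that models one LOM port as four functions and converts one of them to a FlexHBA. The class name, the `-f1` to `-f4` labels and the choice of the last function as the FlexHBA are all assumptions made for the example; they are not how Virtual Connect names or orders things.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlexFunction:
    label: str  # hypothetical label, e.g. "LOM1-f1"
    kind: str   # "FlexNIC" (Ethernet) or "FlexHBA" (FCoE storage)


def partition_lom_port(port: str, fcoe: bool = True) -> list[FlexFunction]:
    """Split one LOM port into four functions, optionally one as a FlexHBA."""
    functions = [FlexFunction(f"{port}-f{i}", "FlexNIC") for i in range(1, 5)]
    if fcoe:
        # Which function becomes the FlexHBA is arbitrary in this sketch.
        last = functions[-1]
        functions[-1] = FlexFunction(last.label, "FlexHBA")
    return functions


if __name__ == "__main__":
    for function in partition_lom_port("LOM1"):
        print(function.label, function.kind)
```

With `fcoe=True` the port presents three FlexNICs and one FlexHBA; with `fcoe=False` all four functions stay as FlexNICs.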

The VC modules send and receive the converged Ethernet and DCB/FCoE traffic on the uplinks out of the chassis, and the Ethernet and FC traffic is then split at the upstream FCoE switch.

When converging data, care needs to be taken to manage bandwidth on the uplinks, which is why the QoS enhancements in VC 4.01 have been implemented.

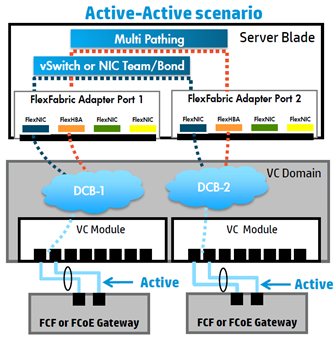

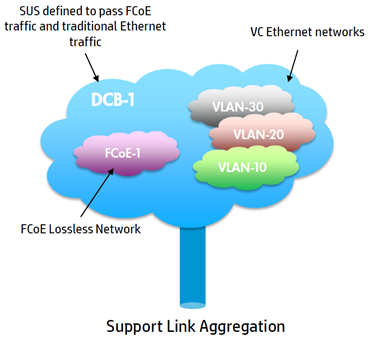

You will need an Active/Active converged Shared Uplink Set (SUS) configuration for FCoE traffic, as FC requires multiple active connections, which then use the normal FC multi-pathing in ESXi. This is the same model you would already be using for FC with traditional SAN A/B isolation.

The SUS must also contain uplinks from only a single VC module so that all uplinks are active (remember, you can’t LACP across multiple VC modules). The ESXi host will then use its native NIC teaming options for multi-pathing and/or failover for the Ethernet traffic. An Active/Active configuration is something I’ve always recommended for Ethernet traffic anyway, as there’s no point paying for Ethernet ports, especially 10Gb, and then not using them during normal operation. Maximise your available bandwidth whenever possible but ensure you have enough to go round when things fail.
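
If you keep your uplink assignments in a spreadsheet or script, the single-module rule is easy to check. The following is a minimal sketch, assuming ports are written in the `enc0:bay1:X1` style and that only a single enclosure is involved; the function names are made up for illustration and are not part of any HP tool.

```python
def parse_port(port: str) -> tuple[str, str, str]:
    """Split 'enc0:bay1:X1' into (enclosure, bay, uplink)."""
    enclosure, bay, uplink = port.split(":")
    return enclosure, bay, uplink


def modules_in_sus(uplinks: list[str]) -> set[tuple[str, str]]:
    """Return the distinct (enclosure, bay) pairs, i.e. VC modules, in a SUS."""
    return {parse_port(port)[:2] for port in uplinks}


def is_single_module_sus(uplinks: list[str]) -> bool:
    """True when every uplink in the SUS comes from the same VC module."""
    return len(modules_in_sus(uplinks)) == 1


if __name__ == "__main__":
    print(is_single_module_sus(["enc0:bay1:X1", "enc0:bay1:X2"]))
    print(is_single_module_sus(["enc0:bay1:X1", "enc0:bay2:X1"]))
```

The first SUS passes because both uplinks sit on the bay 1 module; the second fails because it mixes bay 1 and bay 2.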

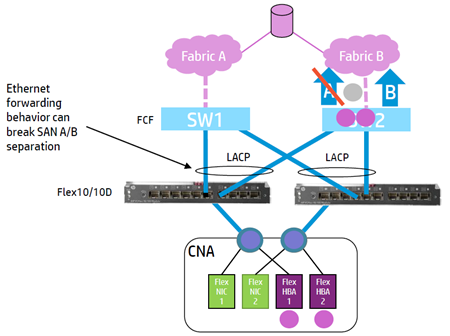

Something to bear in mind, though: if you are using FCoE, it isn’t recommended to use a Cisco Nexus vPC or any other technology that lets a dual-homed LACP group span different fabric switches. VC would then forward FCoE traffic on two uplinks that go to two separate fabrics, which breaks the traditional SAN design fundamental of SAN A/B isolation.

You need to use a topology with single-homed LACP to keep the fabrics isolated, so that FCoE traffic from each FlexHBA always lands on a separate fabric.
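
The isolation rule can be expressed as a simple check on where each LACP group's member links terminate. This is a minimal sketch under my own assumptions: the uplink-to-switch mapping is something you would maintain yourself, and the switch names `nexus-a` and `nexus-b` are placeholders.

```python
def upstream_switches(lag_members: dict[str, str]) -> set[str]:
    """Return the distinct upstream switches that one LACP group lands on."""
    return set(lag_members.values())


def keeps_san_isolation(lag_members: dict[str, str]) -> bool:
    """True when every member link of the LACP group goes to one switch."""
    return len(upstream_switches(lag_members)) == 1


if __name__ == "__main__":
    # Single-homed: both links from the bay 1 module go to the same switch.
    single_homed = {"enc0:bay1:X1": "nexus-a", "enc0:bay1:X2": "nexus-a"}
    # Dual-homed (vPC style): the links are split across both fabric switches.
    dual_homed = {"enc0:bay1:X1": "nexus-a", "enc0:bay1:X2": "nexus-b"}
    print(keeps_san_isolation(single_homed))
    print(keeps_san_isolation(dual_homed))
```

The single-homed group keeps its FCoE traffic on one fabric, while the dual-homed group would spread it across both.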

Only one FCoE network is supported per SUS. In a multi-enclosure environment, all corresponding ports in the remote enclosures are included in the same SUS: selecting, say, enc0:bay1:X1 means bay1:X1 in all remote enclosures is also included.
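
To make the multi-enclosure behaviour concrete, here is a minimal sketch that expands one selected port into the matching port in every enclosure. The enclosure list and the function name are assumptions for the example; VC does this expansion itself.

```python
def expand_across_enclosures(port: str, enclosures: list[str]) -> list[str]:
    """Return the same bay and uplink for every enclosure in the domain."""
    _, bay, uplink = port.split(":")
    return [f"{enclosure}:{bay}:{uplink}" for enclosure in enclosures]


if __name__ == "__main__":
    # Hypothetical three-enclosure domain.
    print(expand_across_enclosures("enc0:bay1:X1", ["enc0", "enc1", "enc2"]))
```

For a three-enclosure domain, picking enc0:bay1:X1 yields bay1:X1 on enc0, enc1 and enc2.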

If you are using Cisco Nexus 5xxx switches, they can operate in either NPV gateway or FC Forwarder (FCF) mode, but in FCF mode you should be using Cisco MDS switches as your FC SAN fabric for interoperability. Also note that SFP+ LR transceivers are not supported on FCoE VC uplinks.

Which VC ports can run FCoE depends on which VC modules you use. VC FlexFabric supports FCoE only on uplink ports X1-X4, while VC Flex-10/10D supports FCoE on all of its uplink ports, X1-X10.
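
Those port limits are simple enough to capture in a lookup table. The sketch below encodes only the two ranges mentioned above; the module type strings and the function name are my own labels, not identifiers from the VC CLI.

```python
# FCoE-capable uplink numbers per module type, as described in this post.
FCOE_UPLINKS = {
    "FlexFabric": range(1, 5),     # X1-X4
    "Flex-10/10D": range(1, 11),   # X1-X10
}


def supports_fcoe(module_type: str, uplink: str) -> bool:
    """True when the given uplink (e.g. 'X3') can carry FCoE on that module."""
    number = int(uplink.lstrip("Xx"))
    return number in FCOE_UPLINKS[module_type]


if __name__ == "__main__":
    print(supports_fcoe("FlexFabric", "X4"))
    print(supports_fcoe("FlexFabric", "X5"))
    print(supports_fcoe("Flex-10/10D", "X10"))
```

An unknown module type raises a `KeyError`, which is deliberate here: it is better to fail loudly than to guess at a port range.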

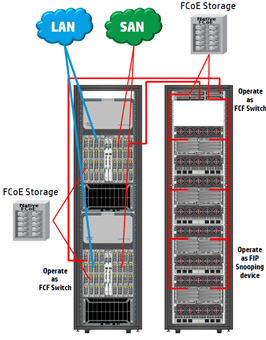

In the future HP is looking to support multi-hop end-to-end FCoE with Virtual Connect and Cisco Nexus 5xxx Switches. This will require Cisco Nexus 5xxx switches as your ToR switches operating as FC Forwarders and Cisco Nexus 5xxx or 7xxx at your Core.

You will be able to deploy native FCoE storage by connecting your SAN directly to any FCF switch, with FSPF and Cisco proprietary routing/flow control used between the FCF switches.

Next up: HP Virtual Connect 4.01: Min/Max Bandwidth Optimisation
